The $10.5 Trillion Tipping Point

Cybercrime is projected to cost the world $10.5 trillion a year, a staggering figure that underscores the dual nature of artificial intelligence in cybersecurity. AI is now operational on both sides: it is the most sophisticated weapon in attackers’ arsenals and the most promising shield for defenders. This paradox defines the point where AI and cybersecurity now collide. According to Cybersecurity Ventures, global cybersecurity spending will exceed $520 billion annually by 2026, with AI-powered solutions representing the fastest-growing segment. The field has moved from theoretical discussion to active battlefield conditions, where adversarial AI attacks and AI cybersecurity tools escalate against each other constantly.

This article explores how AI-driven threat detection and machine learning are transforming cyber defense, and how defenders are responding to emerging risks such as AI-powered malware and generative AI misuse. We’ll examine real-world case studies from 2024-2025, analyze the efficacy of cybersecurity AI frameworks, and provide actionable strategies for implementing zero-trust AI models. As Subo Guha, SVP of Product Management at Stellar Cyber, puts it: “The SOC will fundamentally change from a collection of disconnected, siloed tools into a single, cohesive, intelligent system supervised by human experts.” The stakes could not be higher: organizations that fail to navigate this intersection risk catastrophic compromise in an era when AI-powered threats operate at machine speed.

The Rise of AI in Cybersecurity Defenses

Modern cybersecurity operations have undergone a paradigm shift from reactive to predictive defense, largely driven by AI’s ability to process vast security telemetry in real time. Today’s AI cybersecurity tools like Darktrace, CrowdStrike Falcon, and Google’s Chronicle Security Operations platform represent the vanguard of this transformation, moving beyond simple automation to true cognitive analysis.

How AI Transforms Threat Detection

Traditional security systems relied on signature-based detection—essentially matching known threat patterns against incoming traffic. This approach struggles with zero-day exploits and polymorphic malware that constantly evolves. Machine learning in cybersecurity introduces behavioral analytics that establish baselines of normal activity, then flag deviations that might indicate compromise. For example, in Q2 2025, a major financial institution prevented a ransomware attack when its AI system detected anomalous data exfiltration patterns from an executive’s account—behavior that matched neither the user’s historical patterns nor typical administrative activity.
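The baseline-and-deviation idea fits in a few lines. This is a minimal sketch under several assumptions:

- It uses a single numeric feature per user: daily megabytes sent outbound.
- It flags a value when its z-score exceeds 3.
- The names `build_baseline` and `is_anomalous` are hypothetical.

Production systems model many features jointly rather than one.

```python
from statistics import mean, stdev

def build_baseline(history: list[float]) -> tuple[float, float]:
    """Summarize a user's historical activity as (mean, sample standard deviation)."""
    if len(history) < 2:
        raise ValueError("need at least two observations to build a baseline")
    return mean(history), stdev(history)

def is_anomalous(value: float, baseline: tuple[float, float], threshold: float = 3.0) -> bool:
    """Flag a new observation whose z-score against the baseline exceeds the threshold."""
    mu, sigma = baseline
    if sigma == 0:
        # A perfectly flat history: any change at all counts as a deviation.
        return value != mu
    return abs(value - mu) / sigma > threshold

# Hypothetical daily outbound data (MB) for one executive account.
history = [120.0, 135.0, 110.0, 128.0, 140.0, 118.0, 125.0]
baseline = build_baseline(history)
print(is_anomalous(130.0, baseline))   # False: a typical day
print(is_anomalous(4200.0, baseline))  # True: an exfiltration-sized transfer
```

A fixed threshold keeps the logic transparent. Real deployments typically tune it per user or per peer group to control false positives.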

Google Chronicle’s implementation demonstrates this evolution in action. By leveraging Gemini-powered analysis, Chronicle transforms raw security logs into actionable insights through:

- Natural language query capabilities allowing analysts to investigate using plain English

- Automated case summarization that reduces investigation time by 75%

- Context-aware threat correlation connecting seemingly isolated events across environments

As Spencer Lichtenstein, Product Manager for Chronicle Security Operations, explains: “AI makes it easier for users to interact with and drill down into their security events. Simply enter your question in natural language and Chronicle AI will do the work for you.”

AI-Powered Defense Capabilities: Traditional vs. Modern

| Capability | Traditional Rule-Based Systems | AI-Powered Predictive Analytics |
|---|---|---|
| Speed | Minutes to hours for analysis | Seconds to real-time detection |
| Accuracy | 60-70% (high false positives) | 90-95% with contextual validation |
| Scalability | Limited to predefined rules | Adapts to evolving threats across cloud, on-prem, IoT |
| Threat Coverage | Known signatures only | Zero-day, insider threats, advanced persistent threats |
| Analyst Workload | High manual triage required | 80% reduction in alert fatigue |

Table 1: Comparative analysis of traditional security systems versus AI-powered defenses

The 2025 SolarWinds remediation effort demonstrated AI’s value in large-scale incident response. The original 2020 compromise was a supply-chain attack delivered through trojanized SolarWinds Orion updates. During remediation, AI-driven threat hunting accelerated containment by identifying compromised nodes through anomalous communication patterns, reducing mean time to respond from weeks to days. The case shows how predictive analytics for cyber threats moves security operations from reactive firefighting to proactive threat neutralization.

AI as the Attacker’s Arsenal: Emerging Threat Landscape

While defenders harness AI for protection, adversaries have weaponized the same technologies to create unprecedented attack vectors. The underground marketplace now offers turnkey AI-powered malware development kits, lowering the barrier to entry for less sophisticated threat actors while amplifying the capabilities of nation-state actors.

The New Generation of AI-Enhanced Threats

In mid-2025, Google Threat Intelligence Group (GTIG) identified the first operational use of “just-in-time” AI in malware with families like PROMPTFLUX and PROMPTSTEAL. These represent a fundamental shift from static malware to adaptive threats that leverage large language models during execution:

- PROMPTFLUX: A VBScript dropper that interacts with Gemini’s API to request specific obfuscation techniques, dynamically rewriting its code hourly to evade signature-based detection

- PROMPTSTEAL: Malware that queries an LLM (Qwen2.5-Coder-32B-Instruct) through the Hugging Face API to generate commands for stealing documents and system information in real time

- QUIETVAULT: JavaScript credential stealer that uses AI prompts to search for secrets beyond standard credential locations

Example PROMPTSTEAL command-generation prompt:

"Make a list of commands to copy recursively different office and pdf/txt documents in user Documents,Downloads and Desktop folders to a folder c:\\Programdata\\info\\ to execute in one line. Return only command, without markdown."

These threats show that generative AI risks extend beyond phishing content creation: LLMs are now active operational components of attack chains. Defenders are lagging. 39% of organizations report AI capabilities embedded in fewer than 10% of their security tools (ETR 2026 State of Security report), which leaves a dangerous capability gap.

Top 5 Generative AI Risks in Cybersecurity

- AI-Obfuscated Malware: As seen with PROMPTFLUX, malware that dynamically rewrites itself using LLM APIs to evade detection

- Automated Vulnerability Exploitation: AI systems scanning for and exploiting zero-day vulnerabilities at machine speed

- Hyper-Realistic Deepfakes: Voice and video impersonation for social engineering attacks with 98% success rates in recent tests

- Adversarial Prompt Engineering: Crafting inputs that manipulate AI systems into bypassing security controls

- Shadow AI Data Leaks: Employees using unauthorized AI tools that exfiltrate sensitive data through prompts

The statistics are alarming: AI-powered malware now evades 90% of signature-based detectors, and 36% of security leaders identify preventing sensitive data from entering AI prompts as their most difficult data protection challenge (ETR 2026). As Erik Bradley, ETR Chief Strategist, notes: “Agentic AI adoption is accelerating and security leaders see it as central to the future of cybersecurity. But the guardrails are still thin.”
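Keeping sensitive data out of prompts usually starts with a pre-submission filter that redacts matches before a prompt leaves the organization. This is a minimal sketch under stated assumptions:

- It uses three illustrative patterns: email addresses, US Social Security numbers and AWS access key IDs.
- `redact_prompt` is a hypothetical name.

Real DLP engines use far larger rule sets, validation and context.

```python
import re

# Illustrative patterns only; a production DLP policy would be much broader.
SENSITIVE_PATTERNS = {
    "EMAIL": re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "AWS_ACCESS_KEY_ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}

def redact_prompt(prompt: str) -> tuple[str, list[str]]:
    """Replace sensitive matches with placeholders and report which rules fired."""
    fired = []
    for label, pattern in SENSITIVE_PATTERNS.items():
        prompt, count = pattern.subn(f"[REDACTED_{label}]", prompt)
        if count:
            fired.append(label)
    return prompt, fired

clean, rules = redact_prompt(
    "Summarize the ticket from jane.doe@example.com about key AKIAABCDEFGHIJKLMNOP"
)
print(clean)  # Summarize the ticket from [REDACTED_EMAIL] about key [REDACTED_AWS_ACCESS_KEY_ID]
print(rules)  # ['EMAIL', 'AWS_ACCESS_KEY_ID']
```

Returning the fired rules, not just the cleaned text, lets the caller log or block the request instead of silently forwarding a redacted prompt.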

Key Technologies at the Intersection

The convergence of AI and cybersecurity has spawned specialized frameworks and architectures designed to harness AI’s power while mitigating its risks. These technologies represent the cutting edge of defensive innovation in 2026.

AI-Driven Threat Detection: Beyond Pattern Matching

Modern AI-driven threat detection systems have evolved from simple anomaly detection to sophisticated behavioral forecasting. Google Chronicle’s implementation exemplifies this progression through its Applied Threat Intelligence (ATI) system, which contextualizes Indicators of Compromise (IoCs) using multiple priority models:

- Active Breach Priority: Identifies indicators observed in active or past compromises (GTI Verdict: Malicious, GTI Severity: High)

- High Priority: Flags indicators associated with threat actors even without active compromise evidence

- Medium Priority: Detects malicious indicators with potential outbound network implications

- Inbound IP Authentication: Specifically monitors for suspicious inbound authentication attempts

This tiered approach enables security teams to focus resources on the most critical threats. As documented in Google’s ATI documentation: “The AI-based models curate and prioritize the matches to assign an Indicator Confidence Score (IC-Score), which indicates our confidence in their use in malicious activity.”
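The tiering logic can be pictured as a routing function over scored indicators. This is a hypothetical sketch, not Google’s implementation. All of the following are assumptions chosen for illustration:

- the `Indicator` fields;
- a 0-100 IC-Score scale;
- a confidence cutoff of 80;
- the order in which the tiers are checked.

```python
from dataclasses import dataclass

@dataclass
class Indicator:
    value: str             # an IP address, domain, or file hash
    ic_score: int          # assumed 0-100 confidence of malicious use
    seen_in_breach: bool   # observed in an active or past compromise
    linked_to_actor: bool  # associated with a tracked threat actor
    inbound_auth: bool     # matched a suspicious inbound authentication attempt

def priority(ind: Indicator, min_score: int = 80) -> str:
    """Assign a hypothetical priority tier, checking the most severe tier first."""
    if ind.ic_score < min_score:
        return "Low confidence (suppress)"
    if ind.seen_in_breach:
        return "Active Breach"
    if ind.linked_to_actor:
        return "High"
    if ind.inbound_auth:
        return "Inbound IP Authentication"
    # Remaining high-confidence malicious indicators, e.g. outbound network matches.
    return "Medium"

print(priority(Indicator("203.0.113.7", 95, True, True, False)))  # Active Breach
```

Checking tiers from most to least severe guarantees that an indicator lands in exactly one queue. Analysts then work the Active Breach queue first.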

Ethical AI in Security: Balancing Power and Responsibility

The integration of AI into security operations introduces critical ethical considerations that impact both effectiveness and compliance. Ethical AI in security requires addressing three fundamental challenges:

- Bias Mitigation: Security AI trained predominantly on Western threat data may overlook region-specific attack patterns

- Explainability: “Black box” models create accountability challenges under GDPR and similar regulations

- Privacy Preservation: Processing sensitive data through AI systems risks unintended exposure

Google’s Secure AI Framework (SAIF) provides a comprehensive approach to these challenges through six core elements, including expanding security foundations to AI ecosystems and contextualizing risks within business processes. As the framework states: “Organizations should construct automated checks to validate AI performance” to ensure ethical deployment.
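One way to act on that advice is a release gate. The gate scores a detection model against a labeled holdout set and blocks deployment if quality falls below agreed floors. This sketch assumes binary labels (1 = malicious). The 0.90 precision and 0.85 recall floors are placeholder values, not SAIF requirements.

```python
def precision_recall(y_true: list[int], y_pred: list[int]) -> tuple[float, float]:
    """Compute precision and recall for binary labels where 1 means malicious."""
    if len(y_true) != len(y_pred):
        raise ValueError("label and prediction lists must be the same length")
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

def passes_release_gate(y_true: list[int], y_pred: list[int],
                        min_precision: float = 0.90, min_recall: float = 0.85) -> bool:
    """Return True only if the model meets both quality floors on the holdout set."""
    precision, recall = precision_recall(y_true, y_pred)
    return precision >= min_precision and recall >= min_recall

# Hypothetical holdout results: one missed detection and one false alarm.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 0, 0, 1]
print(passes_release_gate(y_true, y_pred))  # False (precision 0.75, recall 0.75)
```

Running a gate like this in the deployment pipeline turns "validate AI performance" into a check that can actually fail a release.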

Zero-Trust AI Models: The New Security Paradigm

The integration of zero-trust AI models represents perhaps the most significant architectural shift in enterprise security. Unlike traditional perimeter-based security, zero-trust assumes breach and verifies every request. AI enhances this model through:

- Dynamic Access Control: AI analyzes hundreds of signals (device health, location, behavior) to grant least-privilege access

- Continuous Authentication: Behavioral biometrics verify identity throughout sessions, not just at login

- Automated Policy Enforcement: AI interprets security policies and applies them consistently across hybrid environments
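Dynamic access control and continuous authentication both come down to re-scoring risk on every request. The sketch below is a deliberately simple weighted-sum model. The four signals, their weights and the 0.3/0.6 thresholds are all hypothetical. Real systems learn these values from data and evaluate far more signals.

```python
from dataclasses import dataclass

@dataclass
class AccessContext:
    device_compliant: bool       # device health check passed
    known_location: bool         # request comes from a usual location
    behavior_score: float        # 0.0 (typical) to 1.0 (highly unusual), from a behavioral model
    resource_sensitivity: float  # 0.0 (public) to 1.0 (most sensitive)

def risk_score(ctx: AccessContext) -> float:
    """Combine signals into a 0-1 risk score using hypothetical weights."""
    score = 0.0
    score += 0.0 if ctx.device_compliant else 0.3
    score += 0.0 if ctx.known_location else 0.2
    score += 0.3 * ctx.behavior_score
    score += 0.2 * ctx.resource_sensitivity
    return min(score, 1.0)

def decide(ctx: AccessContext) -> str:
    """Grant nothing by default: allow, require step-up MFA, or deny on every request."""
    risk = risk_score(ctx)
    if risk < 0.3:
        return "allow"
    if risk < 0.6:
        return "step_up_mfa"
    return "deny"

print(decide(AccessContext(True, True, 0.1, 0.5)))    # allow
print(decide(AccessContext(False, False, 0.5, 0.5)))  # deny
```

Calling `decide` on every request during a session, not only at login, is what makes the authentication continuous. A rising behavior score mid-session can trigger step-up authentication or revoke access.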

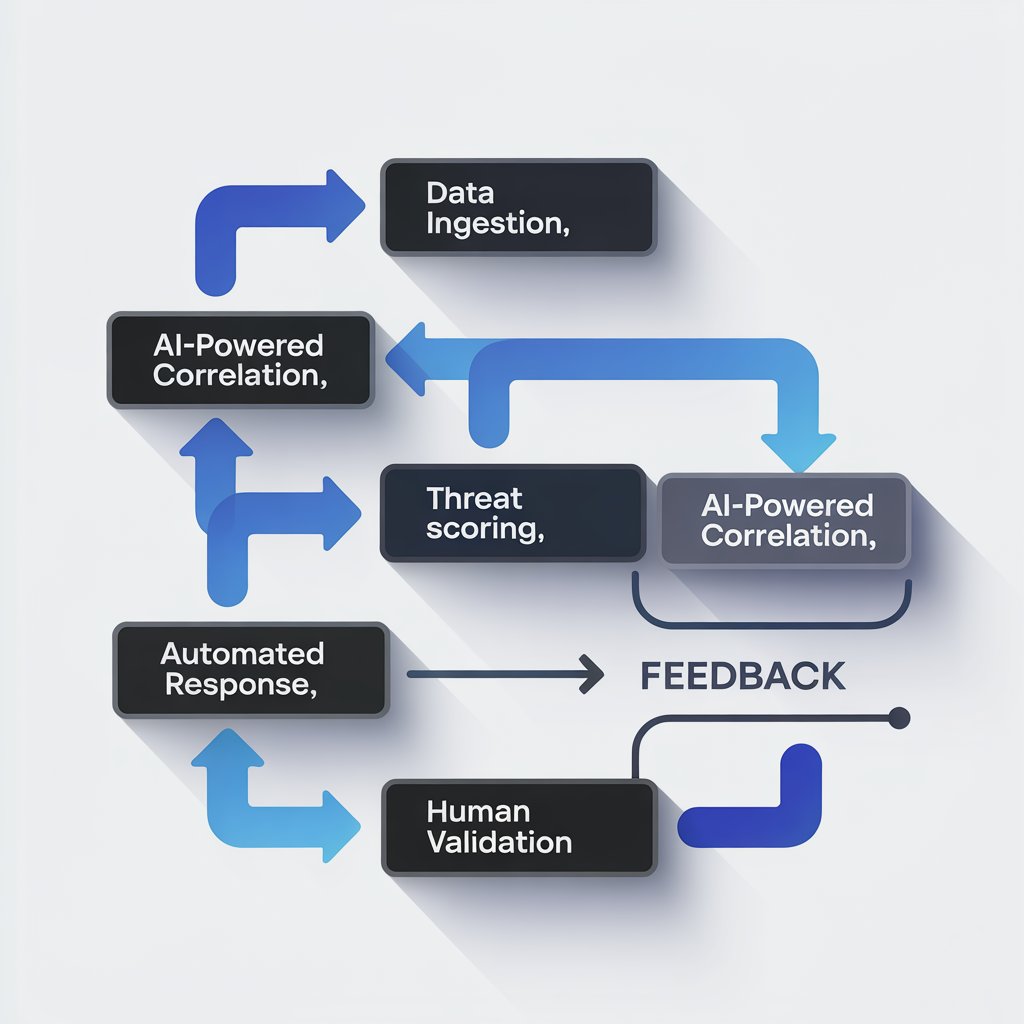

Figure 1: AI workflow from data ingestion to threat neutralization

Microsoft’s use of Security Copilot alongside its zero-trust principles illustrates this evolution. By March 2026, its enhanced security features had significantly improved teams’ ability to detect threats in real time by using AI to analyze massive volumes of security data. The example shows how cybersecurity AI frameworks move security from static rules to adaptive, learning systems.

Challenges and Ethical Considerations

Despite AI’s transformative potential, significant challenges impede its effective implementation in cybersecurity. These challenges span technical limitations, ethical dilemmas, and organizational constraints that security leaders must navigate.

The Adversarial AI Arms Race

The most immediate technical challenge comes from adversarial AI attacks, which target machine learning systems directly. Attackers employ techniques like:

- Poisoning Attacks: Corrupting training data to create backdoors in security models

- Evasion Attacks: Crafting inputs that slip past AI detection systems without being flagged (PROMPTFLUX’s self-modification pursues the same goal against signature-based scanners)

- Model Extraction: Stealing model parameters through API queries to replicate functionality
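An evasion attack is easiest to see against a linear detector, where the gradient of the score with respect to the input is simply the weight vector. The sketch applies the idea behind the fast gradient sign method (FGSM): nudge each feature by at most `epsilon` in the direction that lowers the malicious score.

The weights, bias, features and budget are made-up values. A real attacker must also keep the modified sample functional, which this toy ignores.

```python
import math

def malicious_probability(weights: list[float], bias: float, x: list[float]) -> float:
    """Logistic-regression detector: probability that feature vector x is malicious."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-z))

def sign(v: float) -> int:
    """Return -1, 0, or 1 according to the sign of v."""
    return (v > 0) - (v < 0)

def fgsm_evade(weights: list[float], x: list[float], epsilon: float) -> list[float]:
    """Shift each feature by epsilon against the sign of its weight.

    For a linear model the gradient of the logit with respect to x is the weight
    vector, so stepping along -sign(w) is the L-infinity-bounded step that most
    reduces the malicious score.
    """
    return [xi - epsilon * sign(w) for w, xi in zip(weights, x)]

# Hypothetical detector and sample; the feature meanings are made up.
weights = [2.0, -1.0, 1.5]
bias = -1.0
sample = [0.9, 0.2, 0.8]

before = malicious_probability(weights, bias, sample)
adversarial = fgsm_evade(weights, sample, epsilon=0.5)
after = malicious_probability(weights, bias, adversarial)
print(f"before={before:.2f} after={after:.2f}")  # before=0.86 after=0.39
```

A bounded perturbation is enough to flip the verdict from malicious to benign. This is why adversarial testing belongs in the validation of any ML-based detector.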

Google’s research reveals that threat actors increasingly use social engineering tactics to bypass AI safety controls. In one documented case, attackers posed as participants in “capture-the-flag” cybersecurity competitions to persuade Gemini to provide information that would otherwise be blocked. This highlights a critical vulnerability: AI systems designed to be helpful can be manipulated into becoming attack enablers.

Ethical and Regulatory Tightrope

Ethical AI in security faces a particular tension between effectiveness and privacy. The EU’s General Data Protection Regulation (GDPR) strictly governs the processing of personal data, and the EU AI Act adds risk-based obligations for AI systems. Together they create challenges for security tools that inherently monitor user behavior.

| Benefit | Risk |
|---|---|
| Real-time threat detection across enterprise | Potential GDPR violations from behavioral monitoring |
| Reduced false positives through contextual analysis | Algorithmic bias leading to unfair targeting |
| Automated response to active threats | Lack of explainability in critical decisions |
| Predictive capabilities preventing breaches | Model inversion attacks exposing training data |
| Resource optimization for security teams | Over-reliance creating skill atrophy |

Table 2: Ethical considerations in AI security implementations

The skills gap compounds these challenges. While 59% of CISOs report AI initiatives as “work in progress,” only 22% of security teams have dedicated AI expertise (ETR 2026). This creates dangerous implementation gaps where organizations deploy AI tools without understanding their limitations or failure modes.

Future Trends and Best Practices

As we move deeper into 2026, several emerging trends promise to reshape the AI-cybersecurity landscape. Organizations that proactively adopt these innovations will gain significant defensive advantages.

Quantum-Resistant AI and Homomorphic Encryption

The convergence of quantum computing and AI introduces both risks and opportunities. Large-scale quantum computers would threaten today’s public-key encryption standards, and quantum-resistant AI models are emerging that aim to detect quantum-enabled attacks before they materialize. Meanwhile, homomorphic encryption lets AI systems compute on encrypted data without decrypting it. This addresses privacy concerns while preserving security efficacy, though at a substantial computational cost.
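Homomorphic encryption is easiest to grasp with an additively homomorphic scheme. The sketch below is a toy Paillier cryptosystem, in which multiplying two ciphertexts yields an encryption of the sum of their plaintexts. An analytics service could therefore total per-host failed-login counts (hypothetical data) without seeing any individual count.

The tiny hardcoded primes make this completely insecure; it only demonstrates the property. Real deployments rely on vetted libraries, and richer computation requires fully homomorphic schemes. The code needs Python 3.9+ for `math.lcm`.

```python
import math
import secrets

def keygen(p: int, q: int) -> tuple[int, tuple[int, int]]:
    """Build a toy Paillier key pair from two primes: public n, private (lam, mu)."""
    n = p * q
    lam = math.lcm(p - 1, q - 1)
    mu = pow(lam, -1, n)  # modular inverse; exists because gcd(lam, n) == 1 here
    return n, (lam, mu)

def encrypt(n: int, m: int) -> int:
    """Encrypt 0 <= m < n using generator g = n + 1 and a fresh random r coprime to n."""
    n2 = n * n
    while True:
        r = secrets.randbelow(n - 1) + 1
        if math.gcd(r, n) == 1:
            break
    return (pow(n + 1, m, n2) * pow(r, n, n2)) % n2

def decrypt(n: int, private: tuple[int, int], c: int) -> int:
    """Recover m = L(c^lam mod n^2) * mu mod n, where L(x) = (x - 1) // n."""
    lam, mu = private
    x = pow(c, lam, n * n)
    return ((x - 1) // n) * mu % n

def add_encrypted(n: int, c1: int, c2: int) -> int:
    """Homomorphic addition: the product of two ciphertexts decrypts to m1 + m2 (mod n)."""
    return (c1 * c2) % (n * n)

n, private = keygen(293, 433)  # toy primes: insecure, illustration only
failed_logins = [3, 5, 12]     # hypothetical per-host counts
total_ct = encrypt(n, 0)
for count in failed_logins:
    total_ct = add_encrypted(n, total_ct, encrypt(n, count))
print(decrypt(n, private, total_ct))  # 20
```

The aggregator only ever handles ciphertexts. Only the holder of the private key sees the total, and even they never see the individual counts.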

Google’s Security AI Workbench reflects a related push to apply AI where defenders need it most. It is designed to “address three of the biggest challenges in cybersecurity: threat overload, toilsome tools, and the talent gap.” As Chris Corde, Senior Director of Product Management, explains: “AI needs to be used in the places that require a high degree of specialization or a high degree of manual effort.”

Five Best Practices for AI Cybersecurity Implementation

- Start with Specific Use Cases: Focus AI implementation on high-impact areas like phishing detection or anomaly identification rather than broad, undefined deployments

- Implement Human-AI Collaboration: Design systems where AI handles repetitive tasks while humans provide strategic oversight (the “human-augmented SOC” model)

- Validate AI Outputs Rigorously: Establish processes to verify AI recommendations, especially for critical decisions

- Audit for Bias and Drift: Regularly test AI systems for performance degradation and unintended biases

- Prioritize Explainability: Choose tools that provide clear reasoning for security decisions to maintain accountability
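The drift audit in the fourth practice can be automated with a distribution-shift statistic. The sketch below computes the Population Stability Index (PSI) between a model’s score distribution at validation time and in production.

It makes three assumptions:

- scores fall in [0, 1];
- the histogram uses ten equal-width bins;
- an alert fires above 0.2, a common rule-of-thumb level rather than a standard.

```python
import math

def histogram(scores: list[float], bins: int = 10) -> list[float]:
    """Fraction of model scores (assumed to lie in [0, 1]) in each equal-width bin."""
    counts = [0] * bins
    for s in scores:
        idx = min(int(s * bins), bins - 1)  # a score of exactly 1.0 goes in the last bin
        counts[idx] += 1
    return [c / len(scores) for c in counts]

def psi(expected: list[float], actual: list[float], bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index: sum over bins of (a - e) * ln(a / e)."""
    total = 0.0
    for e, a in zip(histogram(expected, bins), histogram(actual, bins)):
        e, a = max(e, eps), max(a, eps)  # floor empty bins to avoid log(0)
        total += (a - e) * math.log(a / e)
    return total

# Hypothetical score samples: production scores have shifted sharply upward.
baseline_scores = [0.05, 0.1, 0.15, 0.2, 0.1, 0.05, 0.3, 0.12, 0.08, 0.9]
production_scores = [0.6, 0.7, 0.65, 0.8, 0.75, 0.9, 0.55, 0.7, 0.85, 0.95]
drift = psi(baseline_scores, production_scores)
print(f"PSI={drift:.2f}", "ALERT: investigate drift" if drift > 0.2 else "stable")
```

PSI only says that the inputs or scores have shifted, not why. An alert should therefore trigger re-validation, for example with a labeled holdout check, before anyone retrains or rolls back.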

Organizations should also reduce generative AI risks by:

- Implementing strict data governance for AI training data

- Deploying content filters for AI inputs/outputs

- Training employees on secure AI usage patterns

- Establishing clear policies for approved AI tools
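A policy of approved AI tools is often enforced at the egress proxy. The sketch below classifies each outbound request host as approved AI, shadow AI or non-AI traffic. It rests on two hypothetical sets of hostnames:

- `APPROVED_AI_HOSTS`, a placeholder for an organization’s own sanctioned gateway;
- `KNOWN_AI_HOSTS`, a small, non-exhaustive list of public AI services.

```python
from urllib.parse import urlparse

# Hypothetical policy: the organization's sanctioned AI gateway.
APPROVED_AI_HOSTS = {"ai-gateway.corp.example"}
# Assumed, non-exhaustive list of public AI service hosts to watch for.
KNOWN_AI_HOSTS = {
    "api.openai.com",
    "generativelanguage.googleapis.com",
    "api-inference.huggingface.co",
}

def matches(host: str, domains: set[str]) -> bool:
    """True if host equals, or is a subdomain of, any domain in the set."""
    return any(host == d or host.endswith("." + d) for d in domains)

def classify_request(url: str) -> str:
    """Label an outbound request as approved AI, shadow AI, or non-AI traffic."""
    host = (urlparse(url).hostname or "").lower()
    if matches(host, APPROVED_AI_HOSTS):
        return "approved_ai"
    if matches(host, KNOWN_AI_HOSTS):
        return "shadow_ai"
    return "not_ai"

for url in ["https://ai-gateway.corp.example/v1/chat",
            "https://api.openai.com/v1/chat/completions",
            "https://www.example.org/"]:
    print(url, "->", classify_request(url))
```

Matching on whole domain labels (the `"." + d` suffix check) rather than substrings prevents look-alike hosts such as `api.openai.com.attacker.example` from being misclassified.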

Conclusion: The Balanced Path Forward

The intersection of AI and cybersecurity represents both our greatest vulnerability and our most promising defense in 2026. As attackers weaponize generative AI to create self-modifying malware and hyper-realistic deepfakes, defenders respond with increasingly sophisticated AI-driven threat detection systems and zero-trust AI models. The $10.5 trillion cybercrime projection is not inevitable. It is a wake-up call for organizations to implement AI security capabilities strategically while meeting the ethical obligations that come with AI in security.

The future belongs to organizations that achieve balance: leveraging AI’s power without becoming over-reliant, automating responses while maintaining human oversight, and innovating defenses while anticipating adversarial countermeasures. As the ETR 2026 State of Security report concludes, “Security vendor expansion is slowing significantly… many enterprises are focusing on platform consolidation and simplification of legacy security stacks.” This consolidation around integrated, AI-enhanced platforms represents the most promising path forward.

The coming years will determine whether we can harness AI’s transformative potential to create genuinely secure digital environments. With thoughtful implementation guided by frameworks like SAIF and practical best practices, we can turn the tide in this critical technological arms race.

Frequently Asked Questions

What are adversarial AI attacks?

Adversarial AI attacks target machine learning systems directly, through techniques like data poisoning (corrupting training data), evasion attacks (crafting inputs that slip past detection) and model extraction (stealing model parameters). They overlap with AI-enabled malware such as PROMPTFLUX, which uses LLM APIs to rewrite its own code and evade signature-based detection.

How does machine learning in cybersecurity improve threat detection?

Machine learning analyzes vast security telemetry to establish behavioral baselines, then identifies subtle deviations that indicate compromise. Unlike rule-based systems, ML detects zero-day threats and advanced persistent threats by recognizing anomalous patterns across multiple data sources, reducing false positives by 30-40% while improving detection speed from hours to seconds.

What makes zero-trust AI models different from traditional security?

Zero-trust AI models continuously verify every access request using hundreds of contextual signals (device health, location, behavior patterns) rather than trusting based on network location. AI enhances this by dynamically adjusting trust scores in real time and automating policy enforcement across hybrid environments, moving beyond static “castle-and-moat” security approaches.

How can organizations address ethical concerns with AI in security?

Organizations should implement bias testing for security AI, ensure explainability of critical decisions, establish strict data governance for training data, and maintain human oversight for high-impact actions. Following frameworks like Google’s SAIF helps contextualize AI risks within business processes while meeting regulatory requirements like GDPR.

What’s the most immediate generative AI risk organizations should address?

Preventing sensitive data from entering AI prompts represents the most critical immediate risk, cited by 36% of security leaders as their top challenge (ETR 2026). Organizations should implement data loss prevention for AI inputs, restrict approved AI tools, and train employees on secure usage patterns to prevent accidental data exposure through shadow AI usage.